Thoughtful Friday #19: One Orchestrator to Rule them all

How Apache Airflow might be able to win the data orchestration race and become the only data orchestrator on the market.

I’m Sven, and this is Thoughtful Friday. We’re talking about how to build data companies, how to build great data-heavy products, and the tactics of high-performance data teams. I’ve also co-authored a book about the data mesh part of that.

Let’s dive in!

Time to Read: 8 minutes

The data orchestrator market is mainly open-source right now

Open source markets are network-effect markets

Newcomers in these markets pick a well-isolated subsegment and “sneak up from behind”

In the data orchestration market, the newcomers picked a subsegment that is not well isolated

🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮

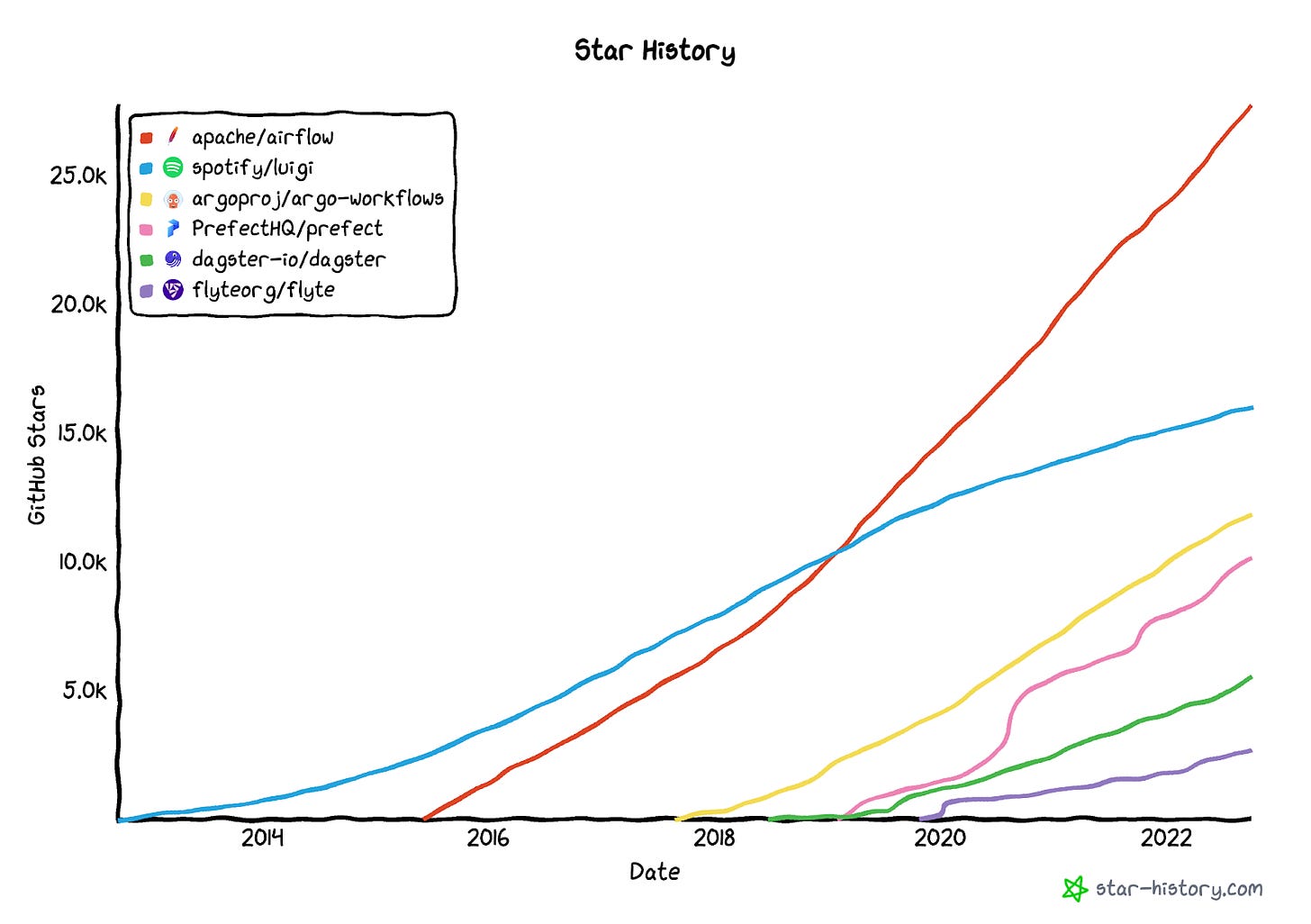

(Data orchestrators and their star ratings on GitHub: a terrible metric for market share & activity, and the only one I have.)

… One orchestrator to find them, one orchestrator to bind them all, and in data bind them; In the Land of Airflow where the shadows lie.

The (data) orchestrator world fascinates me. It’s a great place to study network effect-dominated markets, and I’m looking forward to the next moves of the participants involved.

Today we’re going to study a few strategic plays available to companies operating in such markets, to get an idea of what might happen.

Idea: We’re going to discuss, at a high level, how Apache Airflow might become the only orchestrator in the market, dominating 90% of it, even though new orchestrators seem to grow like weeds.

Note: GitHub stars are a terrible growth metric, but they’re all I have for the purpose of this article. We’re focusing on the data orchestrator market because all of the big orchestrators have open-source versions that actually run in self-service, mostly out of the box. Thus, the market truly relies on the open-source side of things.

We’re going to discuss five things:

A primer on data orchestrators

A reminder of network effects and why they are so strong in these markets

What decline looks like in network-effect markets (hint: it looks like growth and is indistinguishable by sheer numbers alone)

What the newcomers’ likely strategy is (yes, they are doing that)

How the incumbents can fight it.

A primer on data orchestrators

Anna Geller at Prefect argues that “workflow orchestrator” or “data flow automation” better represents what orchestration in the era of the modern data stack is really about.

“Workflow orchestration means governing your data flow in a way that respects the orchestration rules and your business logic. A workflow orchestration tool allows you to turn any code into a workflow that you can schedule, run, and observe.

A good workflow orchestration tool will provide you with building blocks to connect to your existing data stack.” (Workflow Orchestration vs Data Orchestration)

One might notice that this is already quite a broad definition. I personally like to break data orchestrators down into two things: first, data stacks need some kind of “glue” between their technological parts; second, all data orchestrators “glue” these parts together into a structure that represents some form of dependency graph, usually called a DAG (directed acyclic graph).
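
To make the “glue plus dependency graph” idea concrete, here is a minimal sketch in plain Python. It is not Airflow’s or any other orchestrator’s API: tasks are declared together with their upstream dependencies, and the standard library’s `graphlib.TopologicalSorter` (Python 3.9+) yields an order in which every task runs only after everything it depends on. The task names and the `run_task`/`run_pipeline` helpers are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each key is a task, each value is the set of
# tasks it depends on. The names are illustrative only.
pipeline = {
    "extract_orders": set(),
    "extract_customers": set(),
    "transform_join": {"extract_orders", "extract_customers"},
    "load_warehouse": {"transform_join"},
    "refresh_dashboard": {"load_warehouse"},
}


def run_task(name: str) -> None:
    # Placeholder for the real work (a SQL query, an API call, a Spark job, ...).
    print(f"running {name}")


def run_pipeline(dag: dict[str, set[str]]) -> list[str]:
    """Run every task once, each only after all of its upstream tasks."""
    order = list(TopologicalSorter(dag).static_order())
    for task in order:
        run_task(task)
    return order


if __name__ == "__main__":
    run_pipeline(pipeline)
```

A real orchestrator adds scheduling, retries, parallelism, and observability on top, but the dependency graph is the structure everything else hangs off.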

Astasia Myers also distinguishes between two kinds of data orchestrators. The ones from the “old generation” are mostly “task aware”. These include Apache Airflow and Luigi.

The second-generation data orchestrators try to up the game by also becoming “data asset” aware. That means that they don’t just know about the task, but also about the data passed between tasks and therefore offer more value (in theory).
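
A rough sketch of what “data asset aware” adds, assuming a made-up `Asset` class rather than Dagster’s, Prefect’s, or any other real API: instead of wiring tasks to tasks, each asset declares which assets it is built from. The execution order then falls out of the data dependencies, and the orchestrator can answer questions a purely task-aware tool cannot, such as which downstream assets become stale when an upstream one changes. All asset names and helper functions are hypothetical.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter


@dataclass
class Asset:
    """A hypothetical data asset: a table, file, or model the pipeline produces."""
    name: str
    inputs: list[str] = field(default_factory=list)  # assets this one is built from


# Illustrative assets. In a task-aware tool you would wire tasks instead,
# and the tool would not know which data flows between them.
assets = [
    Asset("raw_orders"),
    Asset("raw_customers"),
    Asset("orders_enriched", inputs=["raw_orders", "raw_customers"]),
    Asset("revenue_report", inputs=["orders_enriched"]),
    Asset("customer_count", inputs=["raw_customers"]),
]


def build_order(assets: list[Asset]) -> list[str]:
    """Derive the execution order from the data dependencies alone."""
    graph = {a.name: set(a.inputs) for a in assets}
    return list(TopologicalSorter(graph).static_order())


def stale_downstream(assets: list[Asset], changed: str) -> set[str]:
    """Return every asset that (transitively) reads from `changed`."""
    stale: set[str] = set()
    frontier = [changed]
    while frontier:
        current = frontier.pop()
        for a in assets:
            if current in a.inputs and a.name not in stale:
                stale.add(a.name)
                frontier.append(a.name)
    return stale
```

For example, `stale_downstream(assets, "raw_orders")` returns `{"orders_enriched", "revenue_report"}` and leaves `customer_count` alone, because only the asset graph knows that `customer_count` never reads the orders data.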

One major milestone in the data orchestrator world is probably AWS’s launch of a managed Apache Airflow offering at the end of 2020, Amazon Managed Workflows for Apache Airflow (MWAA); IMHO a huge success for the adoption of the Airflow project. In addition, all the hyperscale clouds offer some kind of data orchestration integrated into their tooling.

Why Network Effects Own Open Source Markets

The Linux Foundation describes the network effects inside open-source projects rather well:

“Open Source projects exhibit natural increasing returns to scale. That’s because most developers are interested in using and participating in the largest projects, and the projects with the most developers are more likely to quickly fix bugs, add features and work reliably across the largest number of platforms. So, tracking the projects with the highest developer velocity can help illuminate promising areas in which to get involved, and what are likely to be the successful platforms over the next several years.” (What do most successful open source projects have in common)

They highlight two effects:

Users of open source software are interested in the “large projects”

Contributors on open source projects flock to the “largest projects”

As a graphic, it looks like this:

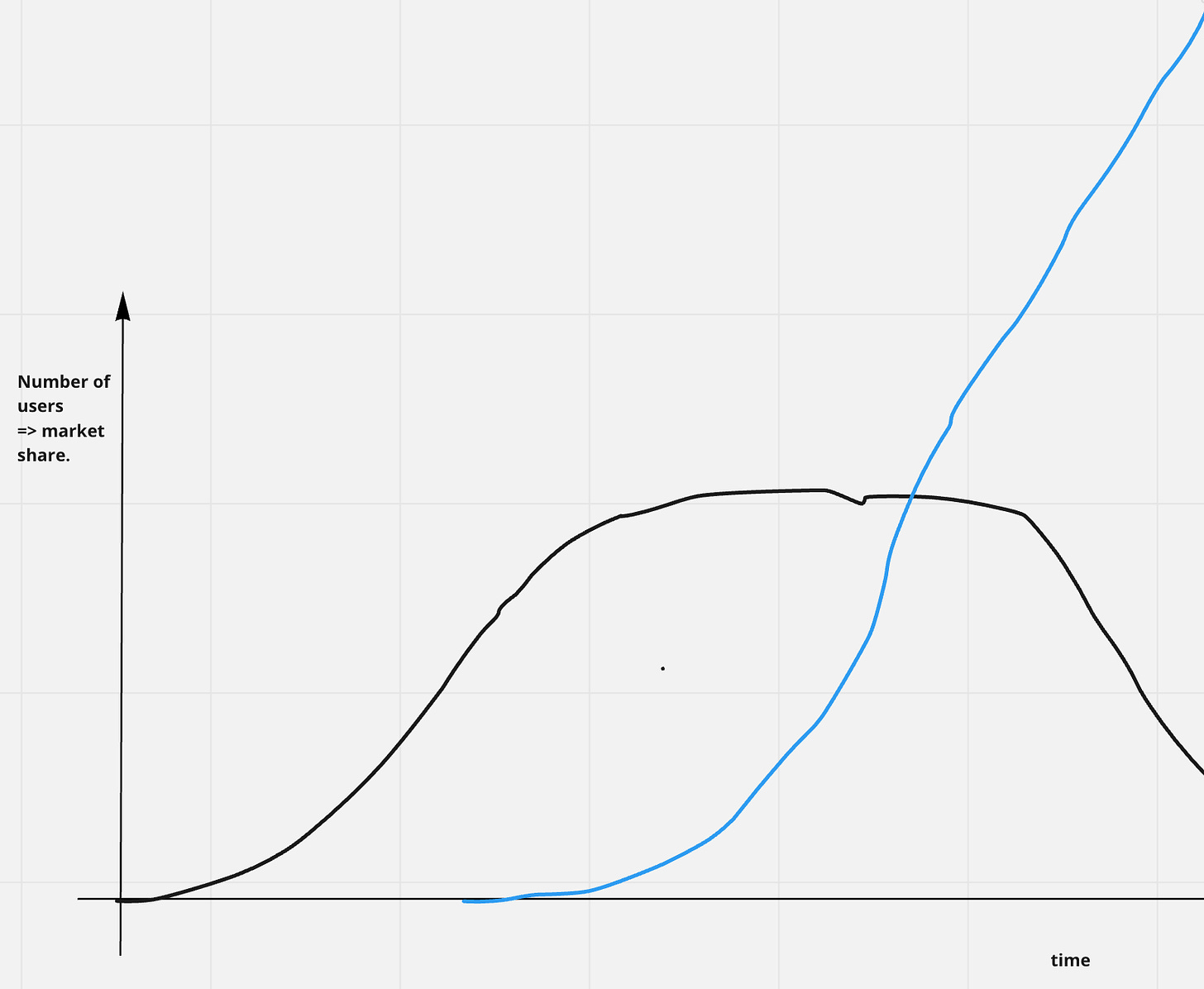

Here, “large” apparently means some combination of users and contributions, in both cases! This implies that the long-run history of any open source market will look like the chart below: a lot of projects pick up speed and lose it again, but one will prevail, pick up all the speed, and accelerate to capture 90%+ of the market.
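
The “one project takes (almost) everything” dynamic can be illustrated with a toy model. This is an illustrative assumption, not the Linux Foundation’s model and not fitted to any real data: at every step, each project grows by a rate proportional to its current market share (the hypothetical parameter `r`), which is one simple way to encode increasing returns to scale.

```python
def simulate_market(sizes: list[float], steps: int, r: float = 0.5) -> list[list[float]]:
    """Toy increasing-returns model (an assumption, not measured data).

    Each step, a project's size grows by a rate proportional to its current
    market share, so bigger projects grow faster in relative terms.
    Returns the market shares after every step.
    """
    history: list[list[float]] = []
    current = list(sizes)
    for _ in range(steps):
        total = sum(current)
        shares = [size / total for size in current]
        # Bigger share means faster relative growth: the increasing-returns assumption.
        current = [size * (1 + r * share) for size, share in zip(current, shares)]
        new_total = sum(current)
        history.append([size / new_total for size in current])
    return history


if __name__ == "__main__":
    # Three hypothetical projects that start out almost equally large.
    shares = simulate_market([100, 90, 80], steps=100)
    for step in (0, 9, 24, 49, 99):
        print(step + 1, [round(s, 3) for s in shares[step]])
```

With these made-up starting sizes, the three projects begin within about 20% of each other, the leader’s share rises at every step, and after 100 steps it is above 90%. Real open-source markets are messier, of course; the model only shows that share-proportional growth on its own is enough to produce a single dominant project.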

Decline in Network Effect Dominated Markets

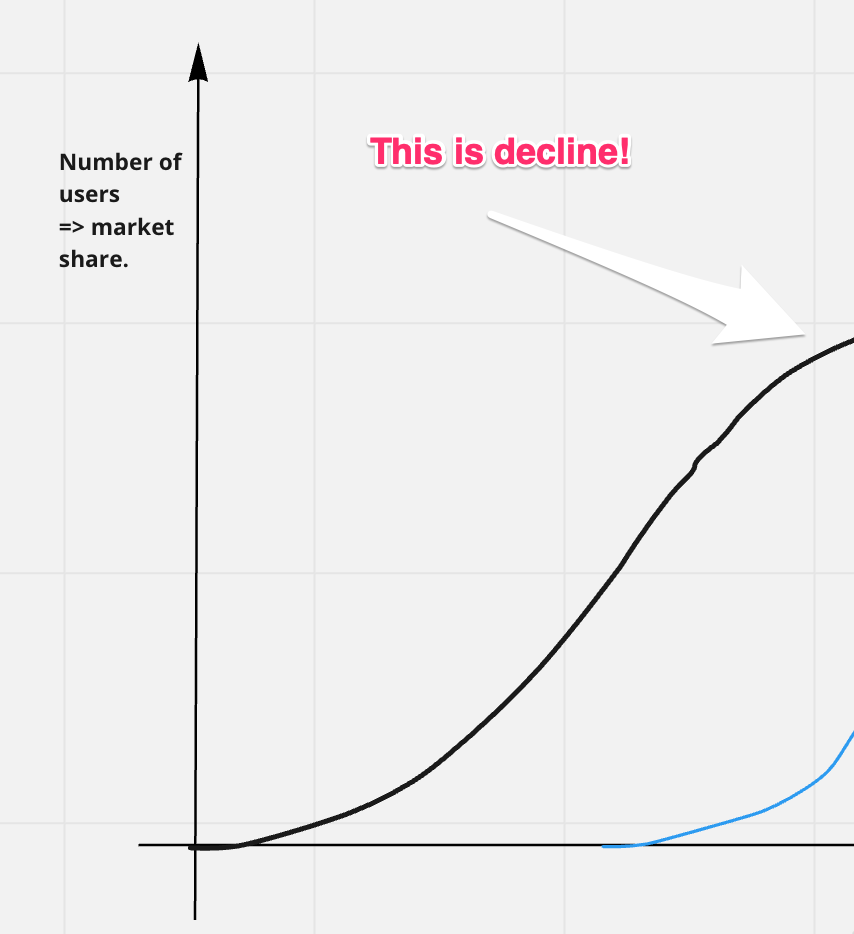

I feel the decline in network effect-dominated markets is harder to spot than in other markets, because of two factors:

It still looks like growth

It is indistinguishable from a “short break in acceleration”

A unit in a network effect market starts to decline as soon as another unit picks up the growth, that is, once users & contributors flock to a different open-source project. The problem is that the incumbent unit might still be growing! Only its growth rate is shrinking, so the decline looks like continued growth.

As a result of this decline, almost inevitably, the situation will play out like this:

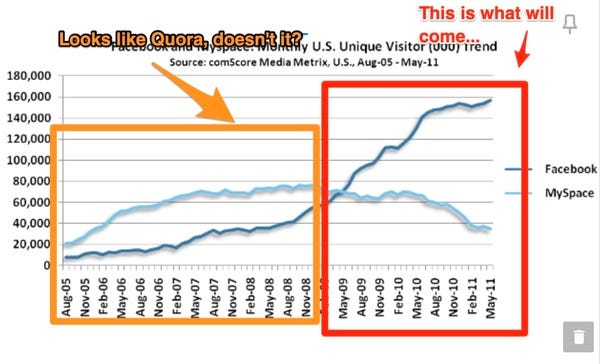

This is almost exactly the “MySpace vs. Facebook growth chart”; to see it in action, check out the old newsletter in which I discussed why the platform Quora will likely vanish.

However, without knowing that users flock from A to B, the first picture is meaningless. And that’s the problem: in these markets, it’s hard to tell what is declining and what is growing.
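
The “decline that still looks like growth” can be made concrete with a few lines of arithmetic. The numbers below are invented for illustration and are not actual star counts or user numbers of any project; the helper functions are hypothetical as well.

```python
# Invented cumulative totals (e.g. stars or users at the end of each year).
# These are NOT real numbers for Airflow or any other project.
incumbent = [10_000, 14_000, 17_000, 19_000, 20_000]
challenger = [500, 1_500, 3_500, 7_000, 12_000]


def yearly_additions(cumulative: list[int]) -> list[int]:
    """How much was added in each period, given a cumulative series."""
    return [later - earlier for earlier, later in zip(cumulative, cumulative[1:])]


def growth_rates(cumulative: list[int]) -> list[float]:
    """Period-over-period growth rate of the cumulative total."""
    return [(later - earlier) / earlier for earlier, later in zip(cumulative, cumulative[1:])]


if __name__ == "__main__":
    print("incumbent additions:", yearly_additions(incumbent))
    print("incumbent growth rates:", [round(g, 3) for g in growth_rates(incumbent)])
    print("challenger additions:", yearly_additions(challenger))
```

The incumbent’s total rises every single year and is still larger at the end (20,000 vs. 12,000), yet it adds 4,000, 3,000, 2,000, and then only 1,000 per year, while the challenger’s yearly additions exceed the incumbent’s from the third period on. Looking at the totals alone, this is indistinguishable from healthy growth.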

How do newcomers break into network-effect markets?

If network effects are so strong, how do newcomers break into markets where incumbents “only get stronger”? My observation is that they usually exploit the effect described above: they “sneak up from behind”, relying on the fact that the incumbent won’t notice its decline until it’s too late.

They do so by picking a market segment, a small piece of the market that is sufficiently well isolated for them to build up network effects of their own.

Example: MySpace had built up a large network. But Facebook managed to focus on a very small segment: college students (from one university!). It then rolled out university by university, each time reaching close to 100% saturation and thus massive network effects. Since MySpace did not focus on this group, it wasn’t able to stop Facebook, and it probably did not realize what Facebook was doing. With a sufficiently large base of college students, Facebook then expanded into other pieces of the market, each time leveraging network effects and its existing user base. Only after passing the “critical point” at which network effects really play out did Facebook switch to the so-called “sprinkler strategy” and roll out to multiple countries simultaneously.

(Facebook's decline. “Why Quora will die”)

If you look at the data orchestrator market, this is exactly what is happening. The “generation 2” data orchestrators are doing just that: they are trying to focus on a market segment.

There is a second option available in open source markets that isn’t available elsewhere: carrying the competition into a non-open-source market. That strategic move is available to both incumbents and newcomers, but we’re not currently seeing major shifts in that direction.

What the incumbent Airflow can do

Facebook could “sneak by MySpace” until it was too late because it chose well-isolated market segments. DuckDuckGo still exists because Google doesn’t want to go there, not because it can’t. Google could leverage its massive accumulated network effects to provide a better DuckDuckGo-like experience at lower cost and with better marketing, but it doesn’t, at least right now.

The incumbent in a network effect-dominated market is always in a much better position to penetrate these market segments if it chooses to. MySpace could have rolled out an offering targeted at college students and simply killed Facebook in its infancy, because MySpace controlled such a large portion of the market. But it didn’t.

The situation in the data orchestration market is different, though. IMHO, the chosen market segment is not well isolated, certainly not as well as college students were. The “data aware” capabilities and practices aren’t yet developed enough to really make a dent in users’ preferences. The big newcomers, Prefect and Dagster, both effectively set out to “build a better Airflow” (my words, not theirs). This puts them in close vicinity to Airflow, not in a well-isolated segment of the market.

So what can the incumbent, Apache Airflow, do? Since Apache Airflow has amassed the biggest network effects, it could use them to simply roll over this market segment. A typical conversation between data engineers illustrates the idea:

Dagster fan: “Hey, can you help me build this runner for Dagster? I need to customize it to adapt to our setup.”

Senior Data Engineer: “Why are you doing this in Dagster? You have to hand-code 10 runners & connections… You do know Airflow already has them by default, right?”

Airflow has mass, breadth, and integrations. It could probably just develop capabilities similar to Dagster’s and Prefect’s, possibly even as variations of Airflow. These variations would have better connectivity, more contributors, and, likely very quickly, a larger user base than Prefect and Dagster combined, effectively stealing this segment and thus the market.

Airflow could also provide a better extension framework and some form of platform or marketplace on which others can develop, and profit from, these kinds of variations.

Airflow can and should leverage its network effects, not just its dominance, and play this one out while it still can, because sooner or later Dagster, Prefect, or some other newcomer will find a properly isolated market segment and start sneaking up from behind until it is too late.

Summary

But who knows? This exercise is mainly meant to study strategic plays in network effect-dominated markets, and this market makes a particularly good example. To make a good prediction and give truly good advice, you would need better data on the projects’ actual activity numbers, as well as a better market analysis of the chosen positions & segments.

I hope it still helps you understand these kinds of markets.


Want to recommend this or have this post made public?

This newsletter isn’t a secret society, it’s just in private mode… You may still recommend & forward it to others. Just send me their e-mail or ping me on Twitter/LinkedIn, and I’ll add them to the list.

If you really want to share one post in the open, again, just poke me and I’ll likely publish it on Medium as well so you can share it with the world.
