Thoughtful Friday #15: The State of Data Mesh Tooling

I’m Sven, and this is Thoughtful Friday, the email that helps you understand and shape the one thing that will power the future: data. I’m also writing a book about the data mesh part of that.

Let’s dive in!

Time to Read: 7 min

We have no special-purpose tools for the data mesh yet

We need special-purpose tools for the data mesh

There are two good criteria for evaluating tools for an “ok” fit for a data mesh

🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮🔮

Lately, I got poked to share my perspective on the state of data mesh tooling.

Usually, when I come to that point in a talk, I have a line that goes something like this:

“The technological part isn’t the hard part; the organizational and social part is. BUT because we don’t have special-purpose tools yet, the tech part still isn’t fun at all. We have to repurpose tools made for something different, which inevitably means careful work and extra effort.”

That makes it sound like everything about the data mesh is hard work, so let’s pick the technological side apart. This isn’t going to be a tool list. Instead, I’ll share three meta-thoughts on the state of data mesh tooling that you can use to evaluate the space yourself, whether you want to launch a start-up building new tools, write technical articles about it, or pick the tools you want to use.

Here are the three general thoughts, which I’ll dive into below:

We do need special-purpose tools for stuff! Pipelines and CI/CD systems are awesome. Without them, there would be no DevOps, would there?

We’re still missing convergence on a general way of developing data apps, something like the pipeline concept in software development.

We’re still missing both a standard(!) protocol and an abstraction layer on top of data apps, akin to the HTTP/REST layer. We don’t really have anything like it for decentralized data systems and their communication.

1. On the importance of special-purpose tools

As a kid, I used to hammer in screws. That worked until a small shed I had built collapsed.

Key point: there are special-purpose tools, and they usually do a great job.

Special-purpose tools usually have their place. But for the data mesh, they are even more critical.

Let’s look at the DevOps paradigm shift as an analogy. It is basically a huge socio-technical shift: from a centralized Ops perspective, where one central team uses centralized tooling to operate software, to a decentralized DevOps perspective, where each individual Dev team also takes on the Ops side. To do so, teams usually use more decentralized tooling, either shared or their own.

And since this shift happens both on the social side (people and processes) and on the technical side (tools), it’s a pretty big undertaking.

To make this work, the DevOps world has special-purpose tools, in particular CI/CD tooling with ingrained concepts like a “pipeline”. These help spread best practices even in a decentralized world. No matter which CI/CD tool a team chooses, the concepts of a pipeline, stages, jobs, and testing will be present.

The point is that the data mesh world doesn’t have a single special-purpose tool yet but is in dire need of several.

2. On the importance of central concepts

The DevOps world, or more generally the software engineering world, has great special-purpose tools precisely because it has converged on the idea of pipelines, that is, on the steps necessary to turn a piece of code into a running software system.

Every CI/CD tool allows for stages with several steps, isolates environments, can run pipelines in parallel, and so on. The concepts are pretty tightly defined.
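To make the shared pipeline concept concrete, here is a minimal Python sketch of what these tools have in common: ordered stages, parallel jobs within a stage, isolated environments, and a stop on failure. The names `Pipeline`, `Stage`, and `Job` are hypothetical and don’t mirror any particular CI/CD product; a temporary directory merely stands in for a real isolated environment.

```python
import tempfile
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical job type: receives its own scratch directory, returns True on success.
Job = Callable[[str], bool]


@dataclass
class Stage:
    name: str
    jobs: Dict[str, Job] = field(default_factory=dict)


@dataclass
class Pipeline:
    stages: List[Stage]

    def run(self) -> Dict[str, Dict[str, bool]]:
        """Run stages in order; jobs inside one stage run in parallel.

        Stops after the first stage that has a failing job, so later
        stages (e.g. deploy) never run on broken input.
        """
        results: Dict[str, Dict[str, bool]] = {}
        for stage in self.stages:
            with ThreadPoolExecutor() as pool:
                futures = {name: pool.submit(self._run_isolated, job)
                           for name, job in stage.jobs.items()}
                results[stage.name] = {name: f.result() for name, f in futures.items()}
            if not all(results[stage.name].values()):
                break
        return results

    @staticmethod
    def _run_isolated(job: Job) -> bool:
        # A fresh temporary directory stands in for an isolated environment
        # (a real CI/CD tool would typically use a container or VM).
        with tempfile.TemporaryDirectory() as workdir:
            try:
                return bool(job(workdir))
            except Exception:
                return False  # a crashing job counts as a failed job


# Usage: the failing "lint" job stops the pipeline before "deploy".
pipeline = Pipeline([
    Stage("build", {"compile": lambda d: True}),
    Stage("test", {"unit": lambda d: True, "lint": lambda d: False}),
    Stage("deploy", {"release": lambda d: True}),
])
print(pipeline.run())
# {'build': {'compile': True}, 'test': {'unit': True, 'lint': False}}
```

The point of the sketch is that none of these ideas is specific to one tool, which is exactly why best practices built on them carry over from team to team.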

And yet we are missing convergence on similar concepts in the data world. Sure, the software side of things fits right into the same concept, but the data side does not, and the intersection of data and code certainly does not. That intersection matters, because only where a data pipeline and a software pipeline meet is true value created for any data-heavy application.

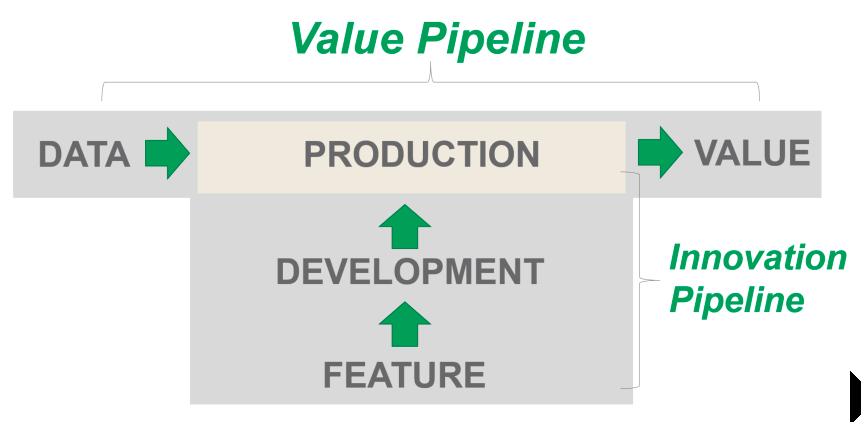

One candidate for a central concept I favor is the idea of two pipelines, one software/innovation pipeline, and one data pipeline, as often illustrated by datakitchen.io and Chris Bergh:

(Work Without Fear Or Heroism - datakitchen.io)
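Here is a toy Python sketch of the two-pipeline idea, assuming that value only materializes where both pipelines meet: a code version from the software pipeline is applied to a batch from the data pipeline only when both have passed their own checks, and the result is checked again. `CodeVersion`, `DataBatch`, `publish`, and the non-negative `amount` rule are hypothetical illustrations, not DataKitchen’s implementation.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

Row = Dict[str, float]
Transform = Callable[[List[Row]], List[Row]]


@dataclass
class CodeVersion:
    """Hypothetical artifact of the software/innovation pipeline."""
    version: str
    transform: Transform
    tests_passed: bool  # result of the code pipeline's own tests


@dataclass
class DataBatch:
    """Hypothetical artifact of the data pipeline."""
    batch_id: str
    rows: List[Row]


def data_checks_pass(rows: List[Row]) -> bool:
    # Example data test (an assumption): every row has a non-negative "amount".
    return all(isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0 for r in rows)


def publish(code: CodeVersion, batch: DataBatch) -> Optional[List[Row]]:
    """Produce output only at the intersection of both pipelines.

    The code must have passed its tests, the incoming batch must pass the
    data checks, and the transformed output must pass them again.
    """
    if not code.tests_passed or not data_checks_pass(batch.rows):
        return None
    output = code.transform(batch.rows)
    return output if data_checks_pass(output) else None


# Usage: the same code version succeeds on a clean batch and rejects a bad one.
double = CodeVersion("v2", lambda rows: [{**r, "amount": r["amount"] * 2} for r in rows], True)
good = DataBatch("batch-1", [{"amount": 1}, {"amount": 2.5}])
bad = DataBatch("batch-2", [{"amount": -1}])
print(publish(double, good))  # [{'amount': 2}, {'amount': 5.0}]
print(publish(double, bad))   # None
```

Note that a perfectly tested code version still produces nothing on a bad batch, and vice versa; neither pipeline alone decides whether a data product gets published.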

That looks simple and easy, but it isn’t implemented as a central concept in any tool I know. More importantly, we don’t have any convergence on this concept at all, there are few best practices following these ideas, and we don’t yet know whether we want to “trace” data, “observe” it, or “version” it.

We also haven’t thought through the implications of this. For instance, if value is only created at the intersection of these two pipelines, it should be OK to do something that greatly improves the timeliness of data at the cost of slightly lower code quality. Yet this topic doesn’t even seem to be discussed in the data community.

Key point: Software engineers have pipelines and lots of related concepts. Data people don’t have much convergence on anything, no best practices surrounding it, and definitely no concepts built into tools.

3. Zoom out to the decentralized world

After lots of grim words, let’s take a look at what we do have: a lot of tools, right? And we know we want to choose tools that lean toward encouraging best practices, to make our socio-technical shift a bit easier.

Key point: You can build a data mesh with any tool. You can hammer in screws. But given the socio-technical nature of things, some tools will make your construction more robust.

The data mesh isn’t just about data itself; it also focuses on:

communication between nodes of the mesh

the decentralized/distributed aspect of the mesh.

So what kinds of tools help us in this situation? I like to compare this to the crypto world, where Bitcoin, at the protocol level, enabled communication between nodes by standardizing on a protocol, and Ethereum, with its smart contracts, acts as a kind of abstraction layer that allows building higher-level things on top of that communication.

So two kinds of tools should help us in the data mesh world:

tools building standardization on the communication level

tools providing abstractions & allowing for higher-level constructs
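A hedged Python sketch of the two tool categories: `DataProduct` plays the role of a shared communication contract (a hypothetical one, not an existing data mesh standard), and `join` is a higher-level construct that works across any nodes honoring that contract, regardless of how each node stores its data. All class and function names are assumptions for illustration.

```python
import csv
import io
from typing import Dict, Iterator, List, Protocol


class DataProduct(Protocol):
    """Hypothetical communication standard every mesh node agrees on."""

    def schema(self) -> List[str]: ...

    def read(self) -> Iterator[Dict[str, str]]: ...


class InMemoryProduct:
    """One node: keeps its rows in memory."""

    def __init__(self, columns: List[str], rows: List[Dict[str, str]]):
        self._columns = columns
        self._rows = rows

    def schema(self) -> List[str]:
        return list(self._columns)

    def read(self) -> Iterator[Dict[str, str]]:
        return iter(self._rows)


class CsvProduct:
    """Another node: stores its data as CSV text."""

    def __init__(self, text: str):
        self._text = text

    def schema(self) -> List[str]:
        return next(csv.reader(io.StringIO(self._text)))

    def read(self) -> Iterator[Dict[str, str]]:
        return iter(csv.DictReader(io.StringIO(self._text)))


def join(left: DataProduct, right: DataProduct, key: str) -> List[Dict[str, str]]:
    """Higher-level construct built only on the shared contract."""
    if key not in left.schema() or key not in right.schema():
        raise ValueError(f"both products must expose column {key!r}")
    index = {row[key]: row for row in right.read()}
    return [{**row, **index[row[key]]} for row in left.read() if row[key] in index]


# Usage: join two nodes with completely different storage.
customers = InMemoryProduct(["id", "name"], [{"id": "1", "name": "Ada"}, {"id": "2", "name": "Bob"}])
orders = CsvProduct("id,total\n1,30\n3,12\n")
print(join(customers, orders, "id"))  # [{'id': '1', 'name': 'Ada', 'total': '30'}]
```

Because `join` only depends on `schema()` and `read()`, a node could swap its storage without breaking its consumers, which is roughly the property a shared protocol buys you.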

Sidebar: In the software engineering world, we have HTTP, REST, and event sourcing as such layers. But as we all know, these don’t work well in the data world, where fundamental assumptions of the underlying protocols break down, especially regarding the size of the data and the operations we’d like to perform on it.

With these two ideas in mind, a lot of tools should come to mind: Trino (formerly PrestoSQL) creates standardization by providing a unified SQL interface across many data sources.

Table formats like Apache Iceberg, Hudi, and Delta Lake provide great abstraction layers and thus allow for higher-level constructs.

The whole Kafka ecosystem manages to be both a protocol for communication and an abstraction, which makes it a serious candidate for data mesh implementations. As you might know, this comprehensive scope also makes Kafka pretty hard to digest.

On the other hand, I’m still very skeptical of data catalogs, simply because all data catalogs I’ve seen so far were built for a pre-decentralized data world and thus, by default, become a hammer for a lot of screws.

As you should be used to by now, I don’t have a comprehensive list of tools; I can only provide these thoughts to help you evaluate possible tools, options, and business opportunities.

You’re welcome to share your thoughts with me!
